Project: Deep Learning Methods for Robots in Industry 4.0

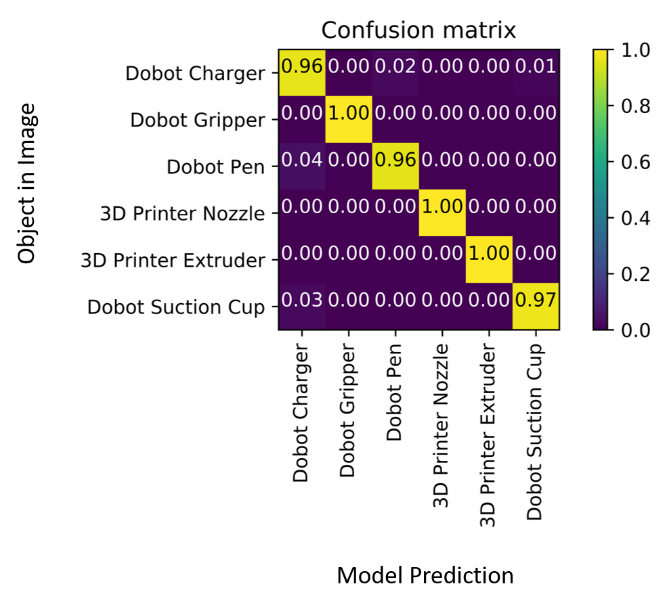

Automatic inventory management in warehouses requires efficient item identification. Barcodes and RFID tags are the traditional approaches to this problem, but both suffer from limitations. This research presents a 2D camera vision-based method that uses a deep convolutional neural network to sort the different items stored in a warehouse for inventory management. The method employs ResNet-50, a deep architecture built on residual learning (the residual network architecture). Running at 4 frames per second (FPS), it achieves an accuracy of 98.94% on a dataset created by the authors, consisting of 1450+ images of machine parts. Data augmentation and transfer learning are used to reach this accuracy, which makes the method suitable for other real-time applications as well. It is presently implemented on a multifunctional 3-DoF robotic arm for validation. More advanced deep learning frameworks such as Mask R-CNN are under testing to perform classification, localization, and segmentation with a single network.
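The residual learning idea behind ResNet-50 can be illustrated with a small sketch. This is a toy, fully connected block in plain Python and not the project's implementation: the actual ResNet-50 uses convolutional bottleneck blocks with batch normalization, and the vector size, weights, and function names below are hypothetical, chosen only to show the identity shortcut `F(x) + x`.

```python
from typing import List

Vector = List[float]
Matrix = List[Vector]


def relu(v: Vector) -> Vector:
    """Element-wise rectified linear unit."""
    return [max(0.0, x) for x in v]


def linear(weights: Matrix, v: Vector) -> Vector:
    """Matrix-vector product: one fully connected layer without bias."""
    return [sum(w * x for w, x in zip(row, v)) for row in weights]


def residual_block(x: Vector, w1: Matrix, w2: Matrix) -> Vector:
    """Compute relu(F(x) + x), where F(x) = w2 @ relu(w1 @ x).

    The `+ x` term is the identity shortcut that defines residual learning.
    """
    fx = linear(w2, relu(linear(w1, x)))
    return relu([f + xi for f, xi in zip(fx, x)])


# Hypothetical 3-dimensional feature vector and weights, for illustration only.
x = [1.0, 2.0, 3.0]
zero = [[0.0] * 3 for _ in range(3)]
identity = [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]

# With all-zero weights F(x) = 0, so the block passes x through unchanged.
passthrough = residual_block(x, zero, zero)

# With identity weights F(x) = x, so the block returns 2x.
doubled = residual_block(x, identity, identity)
```

Because the shortcut carries the input forward even when the learned layers contribute nothing, a block only has to learn the correction to its input. This property is what makes very deep networks such as ResNet-50 practical to train.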

Article(s):

Pratyaksh P. Rao, Abhra Roy Chowdhury, “Learning to Listen and Move: An Implementation of Audio-Aware Mobile Robot Navigation in Complex Industrial Environment”, IEEE International Conference on Robotics and Automation (ICRA), Philadelphia, USA, 2022 (Accepted)

Pratyaksh P. Rao, Kaustubh Joshi, Abhra Roy Chowdhury, “Deep Audio-Visual Learning Based Action Prediction in A Human-Robot Coordinated Task”, Technical Research Poster, IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), Prague, Czech Republic, Sep 2021

Anubhav Patel, Abhra Roy Chowdhury, “Vision based Object Sorting using Deep Learning for Inventory Tracking in Automated Warehouse Environment”, 20th International Conference on Control, Automation and Systems (ICCAS), Busan, Korea, 2020 (Best Paper Finalist)

Anubhav Patel, Abhra Roy Chowdhury, “Vision-based Object Classification using Deep Learning for Mixed Palletizing Operation in an Automated Warehouse Environment”, Advances in Manufacturing: Processes & Systems, Springer, 2021 (Accepted)

Videos:

Deep Learning in Robot Vision for Smart Manufacturing

Robot Vision based Classification in Smart Manufacturing

Gear Defect Detection with Robot Vision